I Set the Direction. I Let AI Find the Path.

SGLTN.co

EXECUTION

AI • Design Leadership • Strategy

PRODUCT SPACE

B2B SaaS • Insurtech • Workflow Management

ROLE

Head of Product Design

Athena was the foundation that allowed us to scale product development predictably and efficiently.

The Model

Most AI-in-design conversations focus on the middle: the execution tasks that AI accelerates. That framing undersells both what designers actually contribute and what AI actually enables.

The more accurate picture…

Human: Define the Problem

Frame the right question

Identify whose problem it is

Set constraints and success criteria

Recognize what's missing from the brief

Decide what's worth solving at all

AI: Connect the Execution Dots

Research synthesis at scale

Pattern detection across data

Component and code generation

Solution options humans may not reach

Documentation and specs

First-draft communications

Analogous pattern exploration

Human: Direct the Solution

Evaluate AI-generated options

Apply taste and judgment

Decide what gets built

Validate against real user needs

Own the final design direction

The critical addition: AI doesn't just execute faster; it also expands the solution space. It surfaces options, combinations, and patterns that a designer working alone wouldn't encounter. The human role isn't threatened by that; it's elevated. Evaluating a wider option set takes better judgment, not less.

How This Plays Out in Practice

Three examples that show the model in action — and reveal that AI plays different roles depending on where the designer's friction actually is.

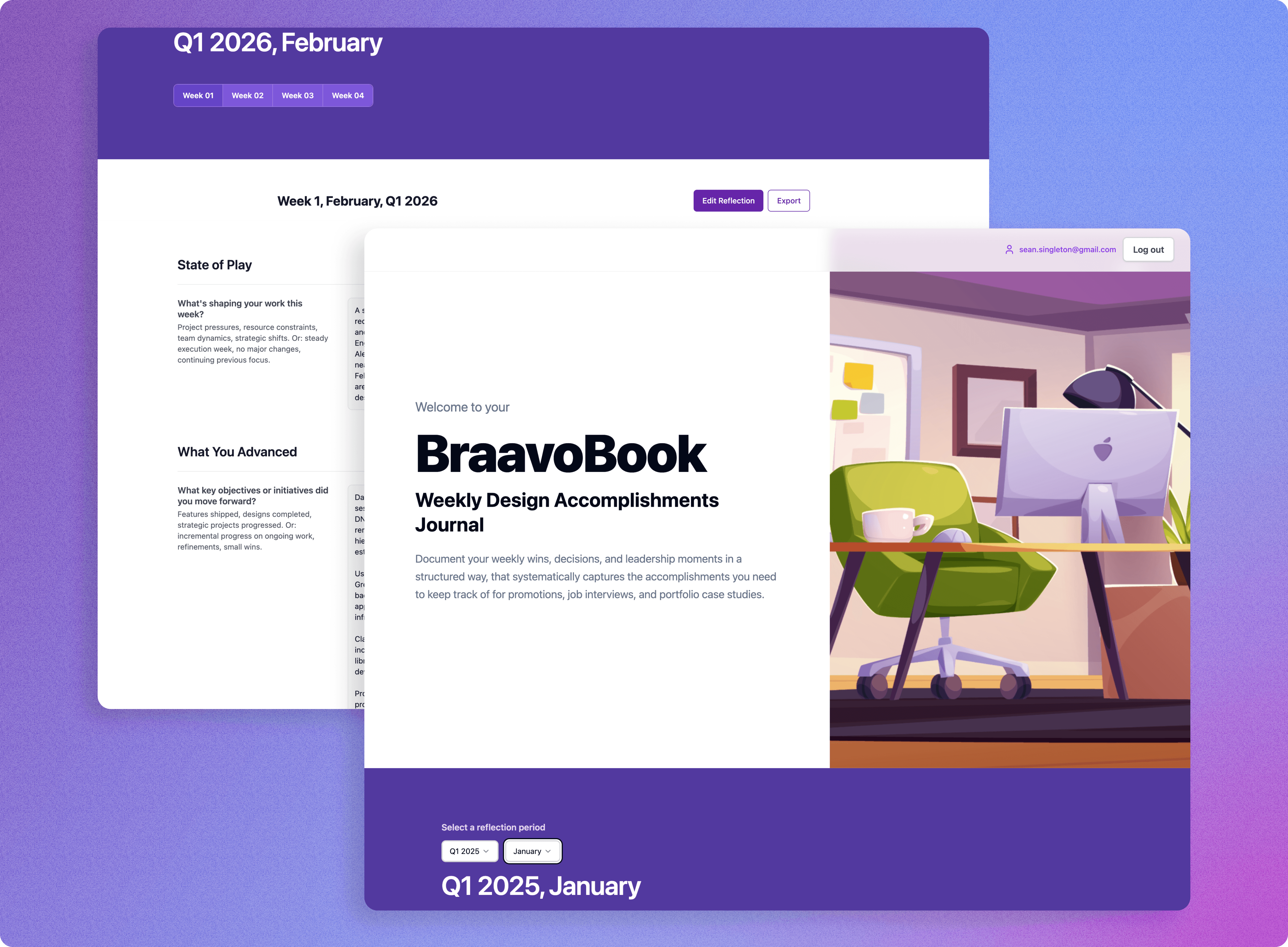

BraavoBook: A Product I Built Because I Needed It

The clearest demonstration of how I work — because I was my own first user.

Problem I defined

Design leaders forget their best work. The bottleneck isn't memory — it's not knowing which weeks matter until it's too late to document them well. I had this problem personally. I recognized it as a design problem. So I built the solution.

What AI does in between

Analyzes weekly journal entries and scores them for career story potential. Synthesizes Granola meeting notes into structured reflections. Surfaces patterns across entries I couldn't see myself. Flags evidence to capture immediately, suggests narrative angles, maps work to portfolio positioning.

What AI found I wouldn't have

Identified an unsolicited prototype I built as a high-value career asset — I had mentally filed it as a side experiment. AI scored it 'High' story value before I'd consciously framed it that way. The tool saw the signal before I did.

Solution direction I own

The seven-section reflection framework, the decision to build this as a weekly practice vs. a portfolio tool, the product positioning, and what gets surfaced vs. stays private.

Why it belongs here

BraavoBook is both a personal project and a product born from lived experience, validated on myself first. It's also a proof of concept: AI allows me to build a tool that operationalizes the exact philosophy described in this case study.

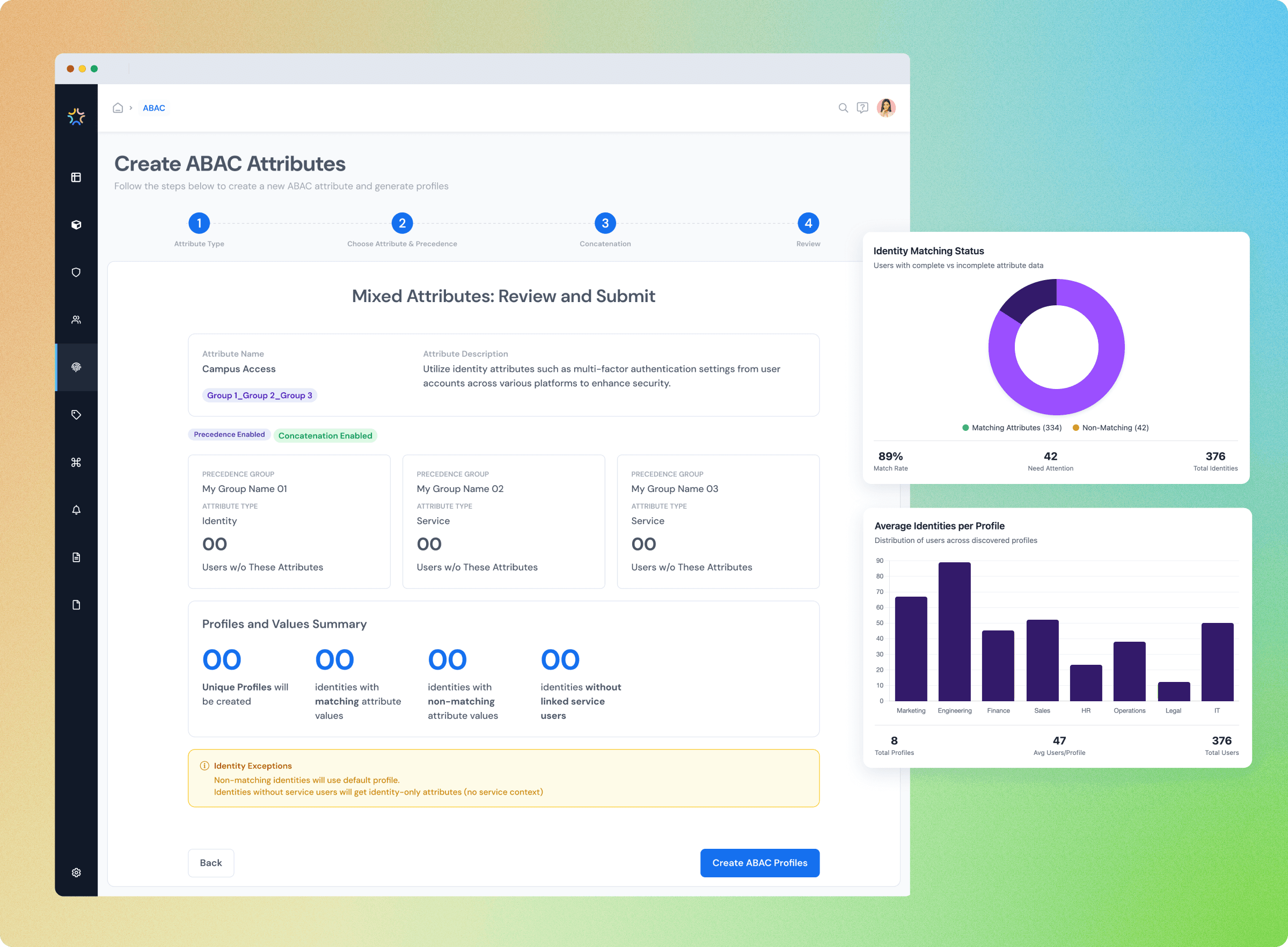

Building a 0 → 1 IGA Security Feature: AI as Domain Abstraction Layer

AI plays a different role when the designer's friction is domain complexity, not execution volume.

Problem I defined

Attribute-Based Access Control is technically dense — policy engines, permission logic, entitlement hierarchies. Designing good UX required engaging with engineering as an informed collaborator. The learning curve was the constraint.

What AI does in between

Compressed domain learning dramatically by synthesizing technical documentation, generating PRDs, and producing initial wireframes and prototype scaffolding. Synthesized user research and review notes. Let me engage with the problem at the right level without weeks of domain ramp-up.

What AI found I wouldn't have

Surfaced UX patterns from adjacent technical domains (developer tooling, permission management) that engineering wouldn't have suggested and I wouldn't have reached through standard competitive research.

Solution direction I own

Recognizing which technical complexity deserved to surface in the UI vs. be abstracted away. Deciding what the user actually needed to understand to do their job. The judgment calls about where ABAC's power was worth exposing.

The key mode

AI as domain abstraction layer — lowering the floor so design judgment could engage sooner. Outcome: ABAC shipped and drove 189% pipeline growth.

End User Portal: AI as Execution Multiplier at Scale

When the designer already understands the domain deeply, AI's role shifts entirely.

Problem I defined

The End User Portal served two very different personas: an admin/manager with governance responsibilities and an employee who needed a simple, intuitive access experience. 20+ user stories across seven feature sets. One designer.

What AI does in between

Handled the volume of execution: generating user stories, building out end-to-end interactive prototypes for both personas, synthesizing review notes across multiple rounds, generating component variations.

What AI found I wouldn't have

Identified interaction asymmetries between the two personas that weren't obvious from the user stories alone. These were places where the admin's mental model and the employee's mental model would likely conflict at the UI level.

Solution direction I own

The persona definitions, the experience principles for each role, and the decisions about where the two flows needed to feel identical vs. where differentiation was the right design answer.

The key mode

AI as execution multiplier — raising the ceiling on what one designer could ship. The domain was intuitive on both sides; the constraint was scale. AI handled the volume so judgment could govern the whole.

What This Means for Design in Practice

If AI connects the execution dots, the design team's premium shifts. Three implications for how I'd build and lead a practice:

AI plays different roles depending on where designer friction lives

The IGA Security projects used the same philosophy, but AI did different work in each. When friction is domain complexity, AI lowers the floor, compressing learning curves so design judgment can engage sooner on technical problems. When friction is execution scale, AI raises the ceiling, handling volume so one designer can govern a much larger scope. Knowing which mode you're in, and deploying AI accordingly, is itself a design leadership skill.

Hire not for execution craft alone, but for problem definition and solution evaluation

The designers who thrive in this model have sharp problem framing instincts and strong critical evaluation skills. They can assess a wide AI-generated option set and recognize what's right. This is different from the execution-focused hiring lens most design orgs still use. Taste, judgment, and domain fluency become the primary axes.

Design the AI's role explicitly, not by default

Most teams let AI usage emerge organically, so whoever adopts it fastest sets the norms. A stronger approach is designing AI's role as deliberately as you'd design any other part of the process: where it enters, what it produces, who evaluates it, and what it never touches. That's a design leadership decision, not a tooling decision.

TBH…

Operating as the sole designer at an early-stage B2B SaaS company means execution constraints are real. Leaning on AI let me hold onto the design leadership work (problem definition, stakeholder alignment, systems thinking) and still ship at the speed the company needed.

In this instance, the constraint sharpened the model. When you can't afford to spend your judgment on execution, you get very clear about where your judgment actually belongs.